nvidia-smi shows a textual representation of GPU and GPU memory utilization, along with details of each process running on both GPUs of the node.

    # Load the CUDA environment
    ~]$ module load cuda/9.2
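For scripted checks, the same utilization figures can be requested as CSV instead of the full table. The following is a minimal sketch, assuming nvidia-smi is on the PATH once the CUDA module is loaded; `format_gpu_row` is a hypothetical helper introduced here for illustration, not part of nvidia-smi.

```shell
# Turn one CSV row ("index, util, used MiB, total MiB") into a readable line.
# format_gpu_row is a hypothetical helper, not an nvidia-smi feature.
format_gpu_row() {
    printf '%s\n' "$1" |
        awk -F', *' '{ printf "GPU %s: %s%% busy, %s/%s MiB used\n", $1, $2, $3, $4 }'
}

if command -v nvidia-smi >/dev/null 2>&1; then
    # One row per GPU, without header or unit suffixes.
    nvidia-smi --query-gpu=index,utilization.gpu,memory.used,memory.total \
               --format=csv,noheader,nounits |
    while IFS= read -r row; do
        format_gpu_row "$row"
    done
else
    echo "nvidia-smi not found; run this on a GPU node with CUDA loaded" >&2
fi
```

On a node with two GPUs this prints two lines, one per device, which is easier to log or compare over time than the interactive table.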

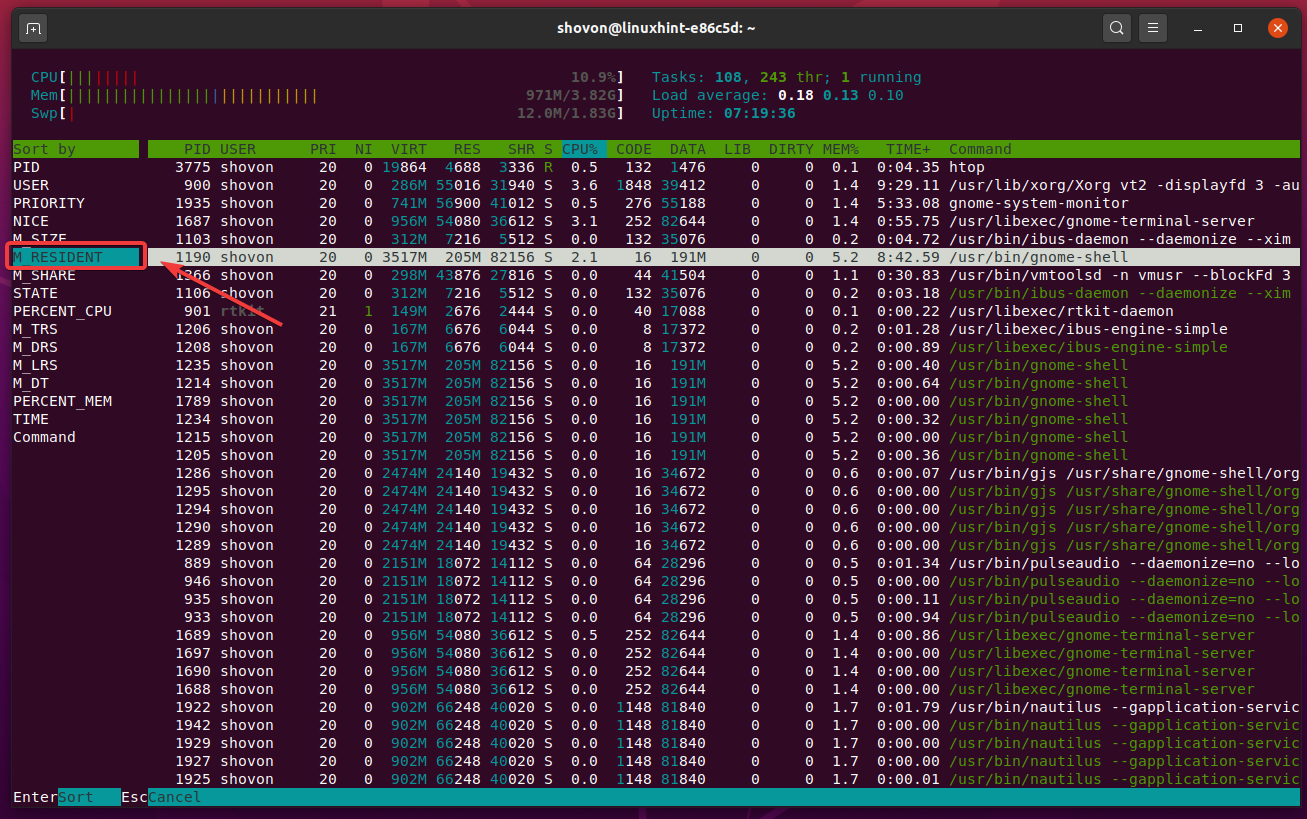

To check the utilization of GPU nodes, you can run nvidia-smi.

htop, run on the compute node (standard nodes), shows a graphical representation of CPU and memory utilization along with details of each process. For more information about htop, see the htop homepage.

For memory profiling, the output files of Valgrind's Massif tool provide (1) a timeline of memory usage and (2) for each snapshot, a record of where in your program memory was allocated. A great graphical tool for analyzing these files is massif-visualizer, but I found ms_print, a simple text-based tool shipped with Valgrind, to be of great help already.
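The Valgrind memory-profiling workflow mentioned above can be sketched as follows. This is only an illustration under stated assumptions: `./my_program` is a placeholder for your own executable, and `massif_peak_bytes` is a hypothetical helper that scans the `mem_heap_B=` lines of a Massif output file for the largest value.

```shell
# Largest heap size (in bytes) over all snapshots of a Massif output file.
# massif_peak_bytes is a hypothetical helper, not shipped with Valgrind.
massif_peak_bytes() {
    awk -F= '/^mem_heap_B=/ { if ($2 + 0 > peak) peak = $2 + 0 }
             END { printf "%.0f\n", peak }' "$1"
}

# ./my_program is a placeholder; the profiling run is skipped if it or Valgrind is missing.
if command -v valgrind >/dev/null 2>&1 && [ -x ./my_program ]; then
    # Write the snapshots to a fixed file name instead of massif.out.<pid>.
    valgrind --tool=massif --massif-out-file=massif.out ./my_program
    # Text timeline and the first snapshot details.
    ms_print massif.out | head -n 40
    echo "peak heap: $(massif_peak_bytes massif.out) bytes"
fi
```

The helper only gives a single number; ms_print or massif-visualizer remain the tools to use when you need to see where the memory was allocated.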

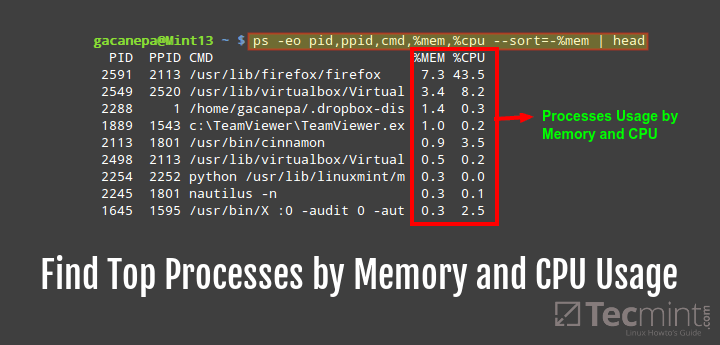

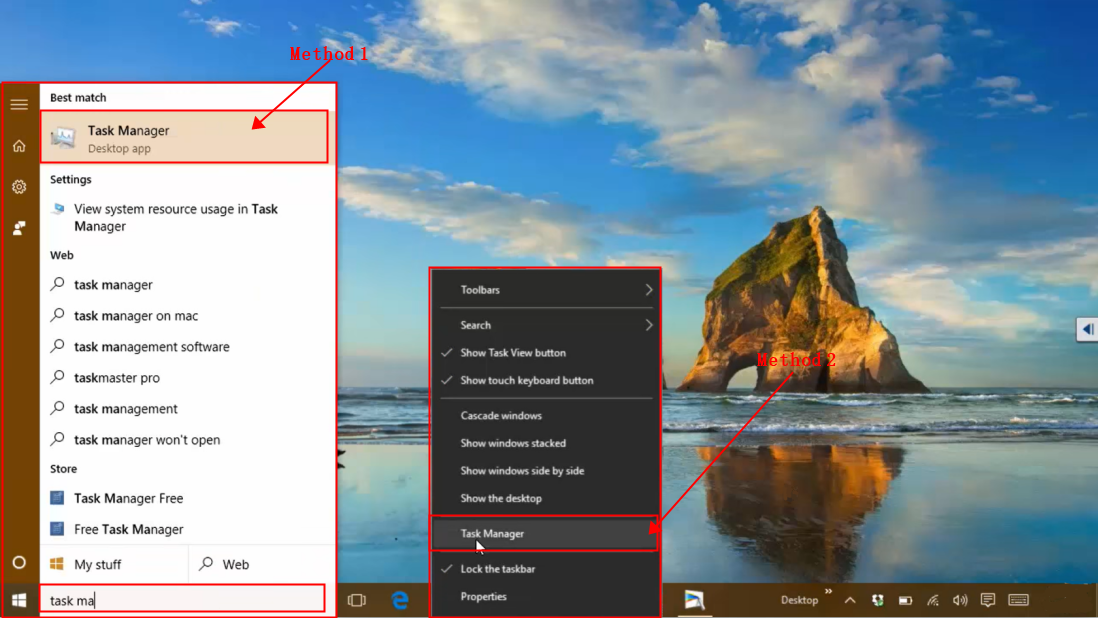

You can monitor the overall performance of your system by looking at information such as Central Processing Unit (CPU) usage, physical memory usage, and virtual memory usage. Track system resource usage: CPU, memory, disk, filesystem, and more.

[...] spent by the requests in queue and the time spent servicing them (Linux only).

On Windows, use the Task Manager: press the Windows key, type task manager, and press Enter. In the window that appears, click the Performance tab. On the Performance tab, a list of hardware devices is displayed on the left side. Clicking any of these devices displays more detailed information on the right side of the window.

You can check the utilization of the compute nodes to use Kay efficiently and to identify some common mistakes in the Slurm submission scripts. To check the utilization of a compute node, you can SSH to it from any login node and then run commands such as htop and nvidia-smi. You can do this only for the compute nodes on which your Slurm job is currently running.

Before using the SSH command on the login node, you should generate a new SSH key pair on the login node on Kay and add it to your authorized keys on Kay.

    # Press Enter 3 times for the prompts to accept the default values.
    # This saves the key pair at the default location and doesn't set passphrase.
    # Copy the public key into list of authorized keys
    cat ~/.ssh/id_ecdsa.pub >> ~/.ssh/authorized_keys

Now check the compute node name on which your Slurm job is running.

    # Check Slurm job queue to find the allocated node
    ~]$ squeue -u $USER
    JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

To check the utilization of standard nodes, you can run htop. Note the hostname in the prompt:

    ~]$ ssh
    ~]$
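The Slurm steps above (find the node your job is running on, then SSH to it) can be combined in a small script. This is a sketch under assumptions, not the documented Kay procedure: `first_node` is a hypothetical helper that only handles simple node lists such as `n101` or `n[101-103]`, and `top -b -n 1` is used in place of htop because a single batch-mode snapshot suits a non-interactive SSH call.

```shell
# First node name from a Slurm node list such as "n101" or "n[101-103]".
# first_node is a hypothetical helper; for complex lists,
# `scontrol show hostnames` is the robust way to expand them.
first_node() {
    printf '%s\n' "$1" | sed -e 's/\[\([0-9]*\)[^]]*\]/\1/' -e 's/,.*//'
}

if command -v squeue >/dev/null 2>&1; then
    # -h drops the header, -t RUNNING keeps running jobs, %N prints the node list.
    nodelist=$(squeue -u "$USER" -h -t RUNNING -o '%N' | head -n 1)
    if [ -n "$nodelist" ]; then
        node=$(first_node "$nodelist")
        # One snapshot of the busiest processes on the allocated node.
        ssh "$node" 'top -b -n 1 | head -n 15'
    else
        echo "no running jobs found for $USER" >&2
    fi
fi
```

Because SSH access is limited to nodes where your job is currently running, the script exits quietly when no running job is found rather than guessing a node name.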
